
Rewards Rule Engine · Retention Strategy · Enterprise SaaS

How I led an end-to-end redesign that reduced campaign deployment time by 75%, eliminated 90% of manual errors, and gave non-technical teams full ownership of a previously developer-gated workflow.

The Rewards Rule Engine powered our entire loyalty ecosystem — automating how incentives were triggered, stacked, and distributed. It worked. But it was a black box: opaque to stakeholders, inaccessible to growth teams, and entirely dependent on engineering for even the simplest campaign changes.

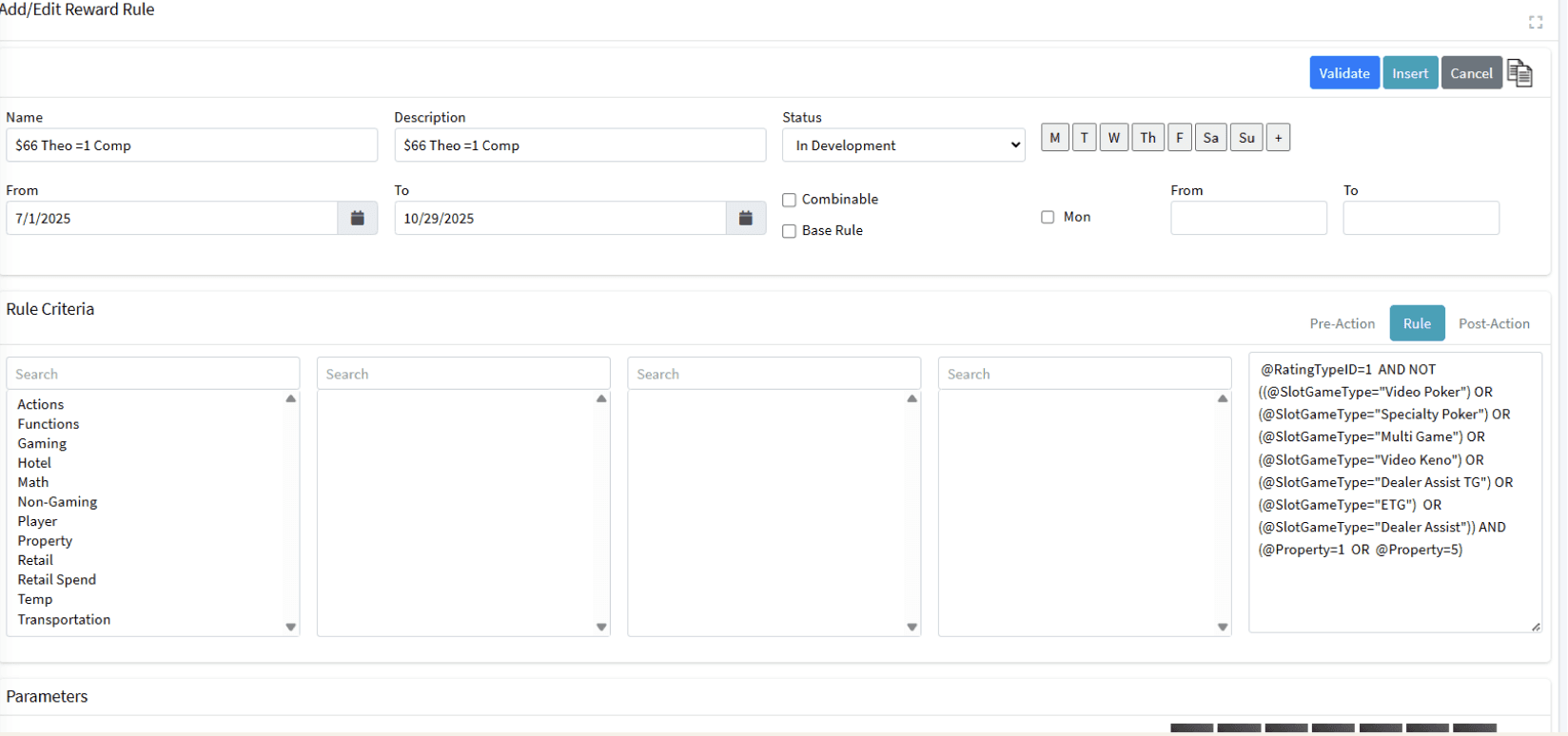

For a tool designed to reward users, the experience of creating a rule felt like punishment. The system was built for engineers, not for the growth teams who actually needed to move fast.

— Pooja Kudesia, UX Lead

This wasn't just a UX problem; it was a business velocity problem. Every campaign change required developer time. Every logic conflict surfaced after launch. Every new reward type needed months of backend rearchitecting. I reframed the brief from "redesign the interface" to "redesign the system so non-technical teams can own the entire campaign lifecycle."

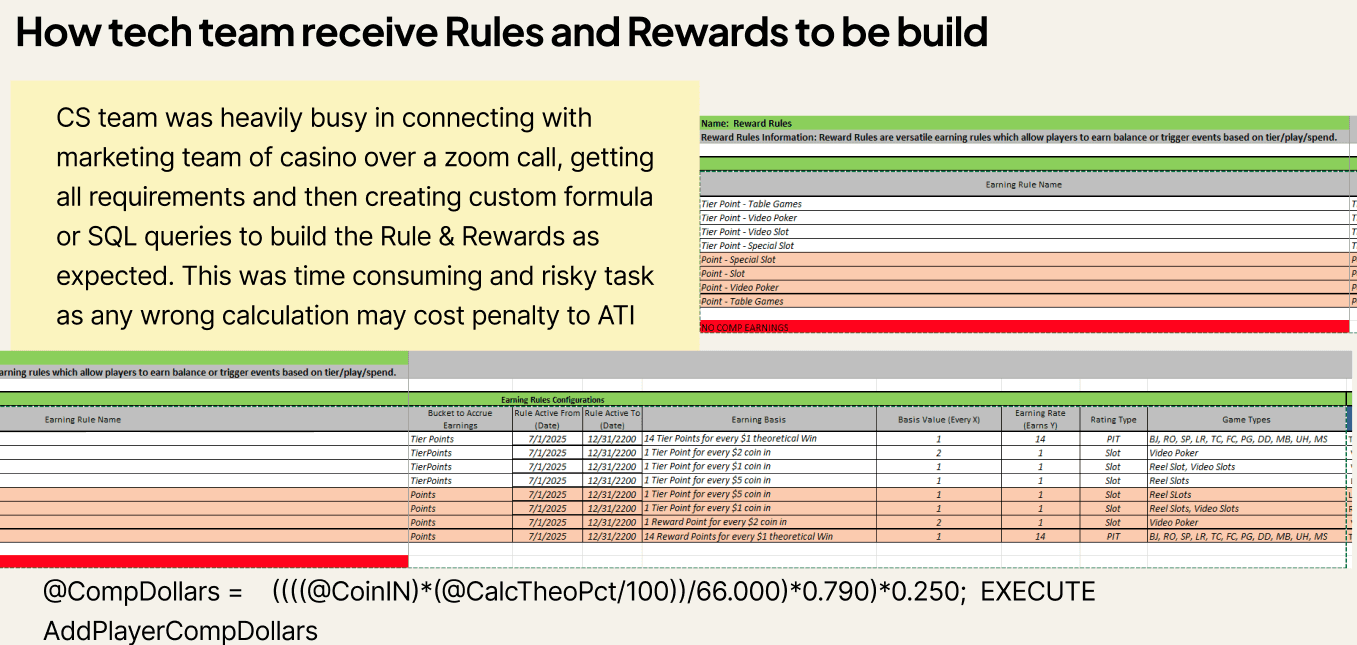

Before scoping a solution, I led a cross-functional diagnostic to understand the true cost of the status quo.

Every rule change — no matter how minor — required developer intervention. Marketing waited days for campaigns that should have launched in hours. Engineering was firefighting instead of building.

Stakeholders had zero visibility into how rewards were triggered or resolved. When campaigns underperformed, nobody could diagnose why. The black-box nature eroded confidence in the data it produced.

As the business grew, the rigid architecture couldn't accommodate new reward types — points, cashback, partner vouchers. Every new format required custom backend work. The system was a ceiling, not a platform.

My approach was diagnostic before it was generative. I built shared understanding across stakeholders before a single wireframe was drawn.

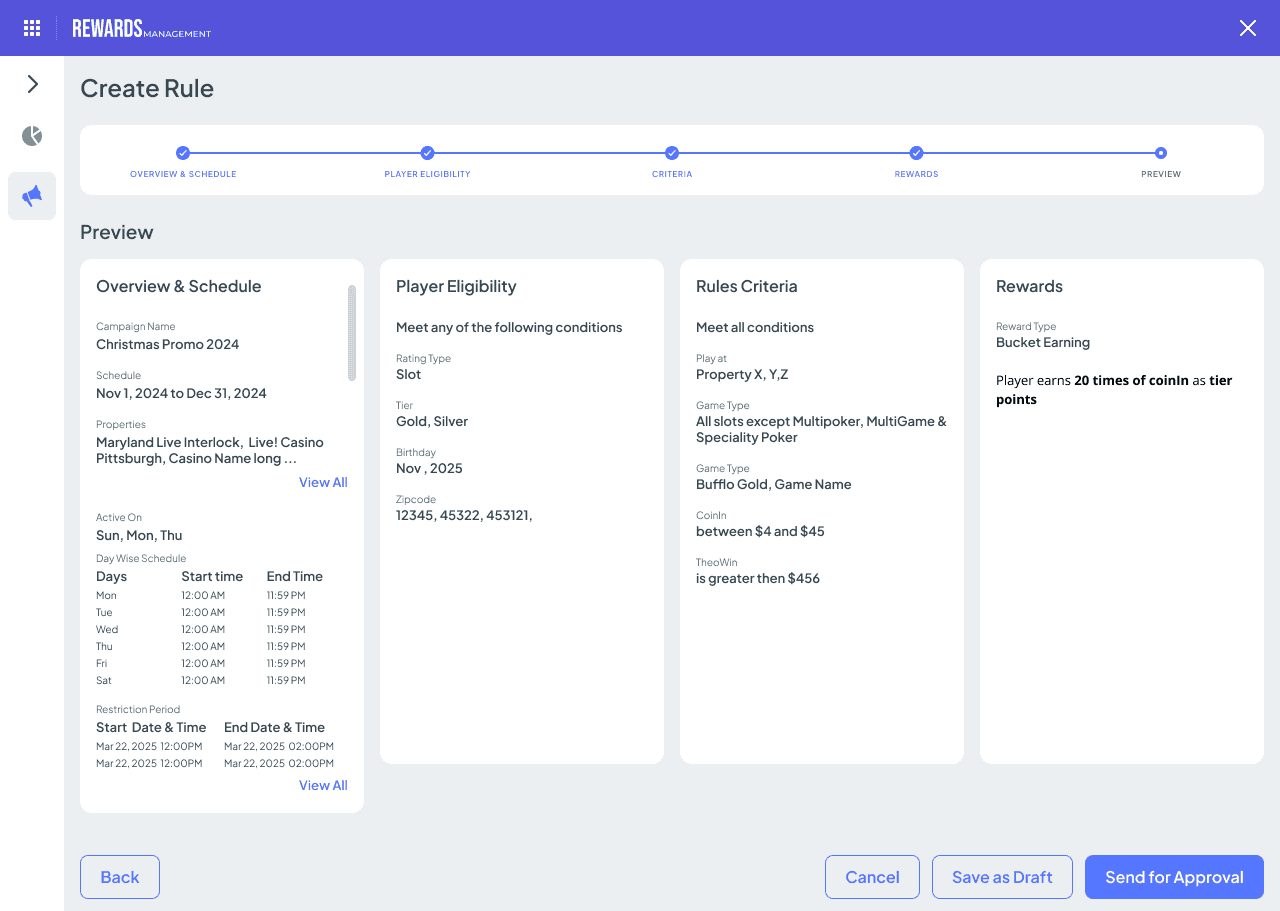

I initiated a structured audit involving Marketing, Product, and Data Engineering — creating a shared language for the problem before any solutions were proposed. This prevented the redesign from being perceived as a UI facelift and positioned design as a strategic driver of operational change.

I personally led interviews asking participants to walk me through their last 3 campaign attempts — mapping friction, workarounds, and failure points in real time rather than asking them what they wanted.

Rather than designing for edge cases, we analysed historical data to identify the most frequent rule combinations. This gave us a principled basis for prioritisation: design the modular builder around the 20% of patterns that cover 80% of real-world use cases.
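To make the 80/20 prioritisation concrete, here is a minimal Python sketch under stated assumptions: each historical campaign is represented as a set of rule names, and both the rule names below (`spend_threshold`, `points`, `cashback` and so on) and the function `pareto_patterns` are hypothetical. It counts how often each rule combination occurs, then greedily takes the most frequent combinations until they account for at least 80% of campaigns. It illustrates the technique only; it is not the analysis the team actually ran.

```python
from collections import Counter

def pareto_patterns(campaigns, coverage=0.8):
    """Return the most frequent rule combinations that together account for
    at least `coverage` of all campaigns, plus the share actually covered."""
    if not campaigns:
        return [], 0.0
    # A combination is order-independent, so count frozensets of rule names.
    counts = Counter(frozenset(rules) for rules in campaigns)
    total = sum(counts.values())
    selected, covered = [], 0
    # most_common() yields combinations from most to least frequent.
    for pattern, n in counts.most_common():
        if covered / total >= coverage:
            break
        selected.append((sorted(pattern), n))
        covered += n
    return selected, covered / total

# Hypothetical campaign history: each entry is the set of rules one campaign used.
history = (
    [{"spend_threshold", "points"}] * 5
    + [{"first_purchase", "cashback"}] * 3
    + [{"referral", "partner_voucher"}, {"birthday", "points"}]
)

selected, share = pareto_patterns(history)
print(selected, share)
# [(['points', 'spend_threshold'], 5), (['cashback', 'first_purchase'], 3)] 0.8
```

In this toy history, two of the four combinations cover 80% of campaigns, which is the kind of result that tells a team which patterns the builder must support first.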

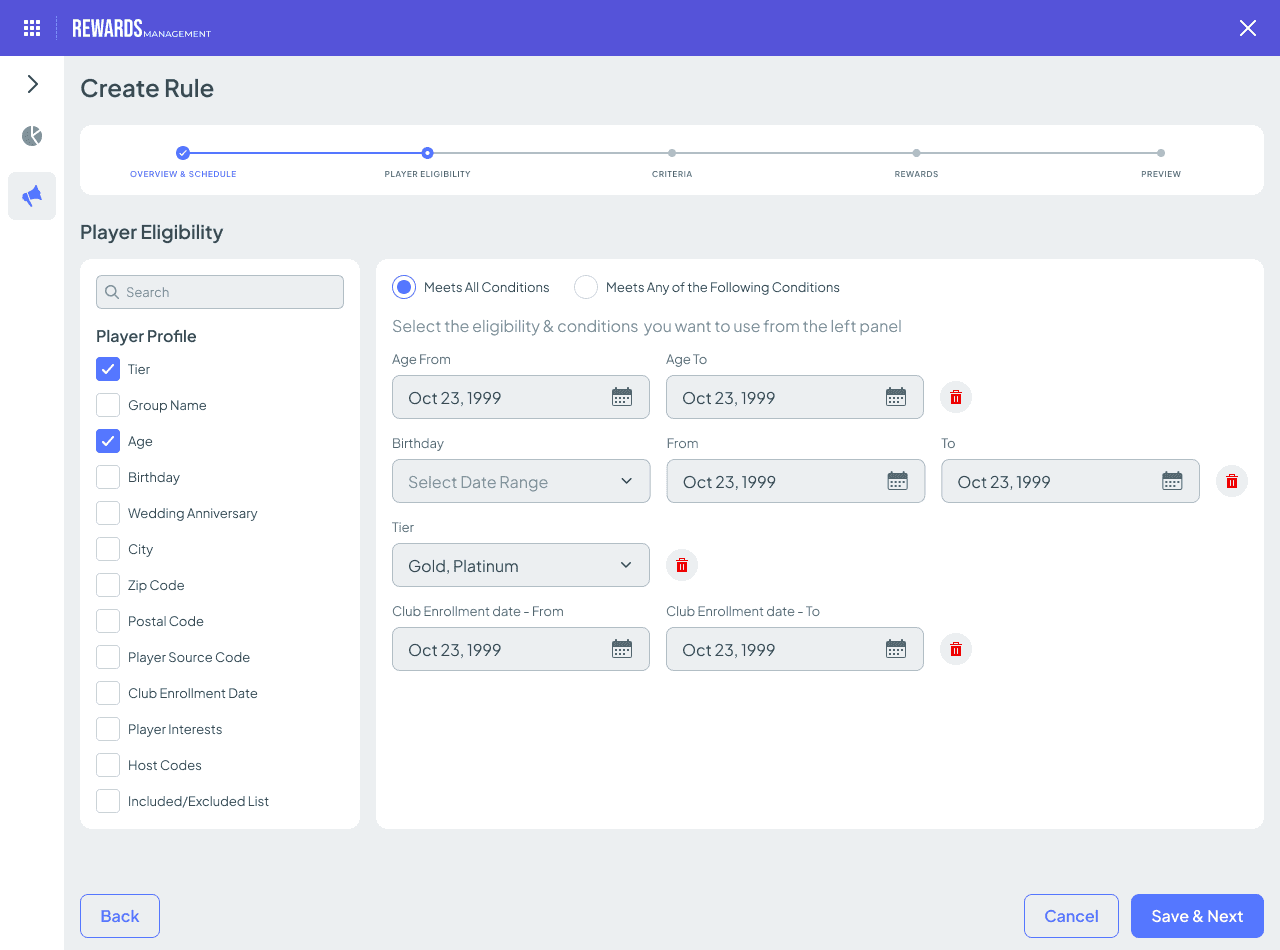

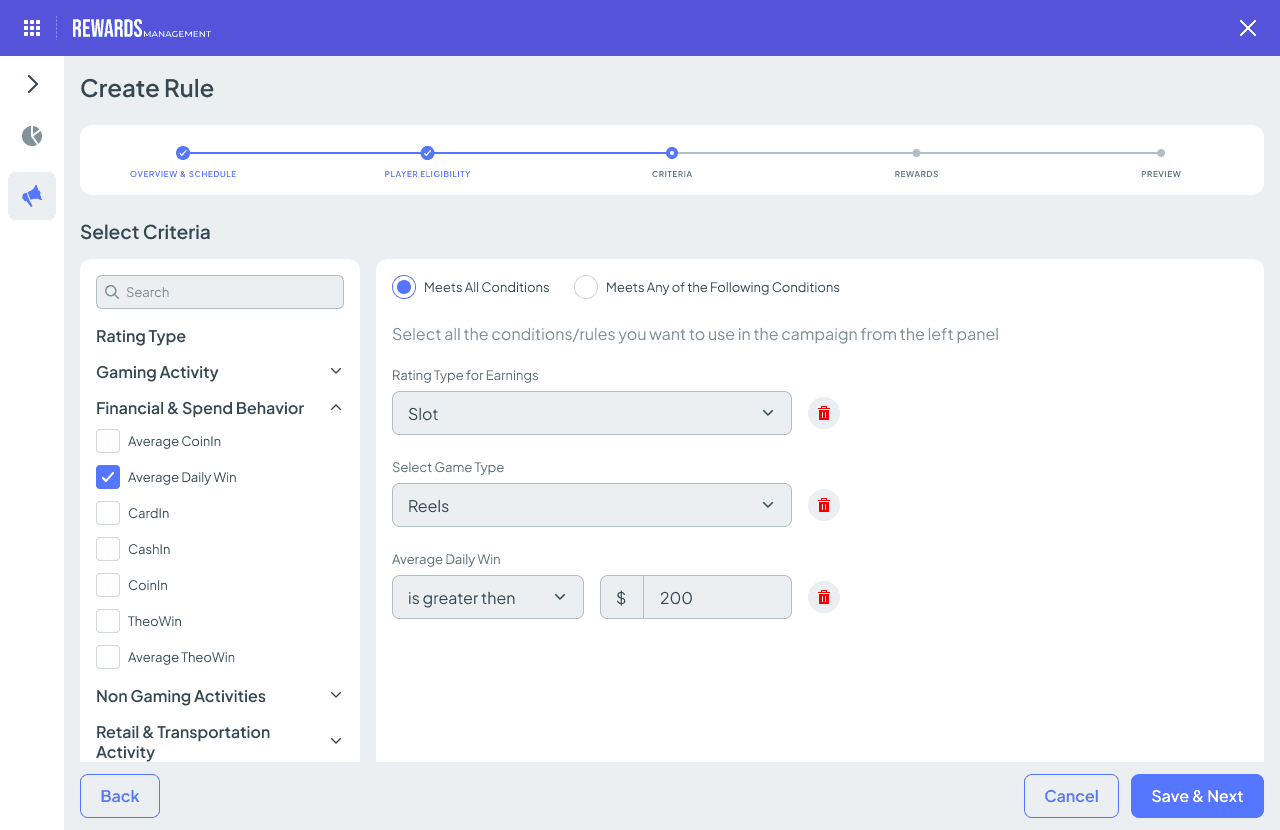

I designed and facilitated multiple rounds of concept testing — specifically to stress-test the logic-building experience. We tested whether non-technical users could correctly configure complex rule stacks without introducing conflicts. The fail-safe validation system emerged directly from observing users in early prototypes.
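The case study doesn't describe how the validation logic works internally. As a hedged illustration only, the Python sketch below assumes a hypothetical rule model (`RewardRule` with a trigger event, an inclusive live window and a `stackable` flag) and treats two rules as conflicting when they fire on the same trigger, are live on overlapping dates, and at least one of them cannot stack. A pre-publish check (the hypothetical `conflicts_with`) then lists every live rule a candidate would clash with.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class RewardRule:
    # Hypothetical rule shape, for illustration only.
    name: str
    trigger: str      # event that fires the rule, e.g. "purchase"
    start: date       # first day the rule is live (inclusive)
    end: date         # last day the rule is live (inclusive)
    stackable: bool   # may it combine with other rules on the same trigger?

def is_conflict(a: RewardRule, b: RewardRule) -> bool:
    same_trigger = a.trigger == b.trigger
    # Two inclusive date ranges overlap if each starts before the other ends.
    overlapping = a.start <= b.end and b.start <= a.end
    return same_trigger and overlapping and not (a.stackable and b.stackable)

def conflicts_with(candidate: RewardRule, live_rules: list[RewardRule]) -> list[str]:
    """Names of live rules that would clash with `candidate` if it were published."""
    return [r.name for r in live_rules
            if r.name != candidate.name and is_conflict(candidate, r)]

live = [
    RewardRule("double_points_weekend", "purchase", date(2024, 6, 1), date(2024, 6, 2), stackable=False),
    RewardRule("tier_bonus", "purchase", date(2024, 1, 1), date(2024, 12, 31), stackable=True),
]
candidate = RewardRule("summer_cashback", "purchase", date(2024, 6, 1), date(2024, 6, 30), stackable=True)

blocking = conflicts_with(candidate, live)
print(blocking)  # ['double_points_weekend']
```

Here `tier_bonus` shares the trigger and dates, but both rules are stackable, so it doesn't block. In a builder UI, a non-empty result would stop the publish action and name the clashing rule, which moves conflict detection from after launch to before it.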

I embedded with Data Engineering throughout the build — not to dictate implementation, but to ensure the modular design system was technically sustainable. The resulting component architecture enabled future reward types to be added without redesign.

I reframed the project around three strategic objectives aligned to business KPIs — not just usability metrics. This framing secured cross-functional buy-in and executive sponsorship.

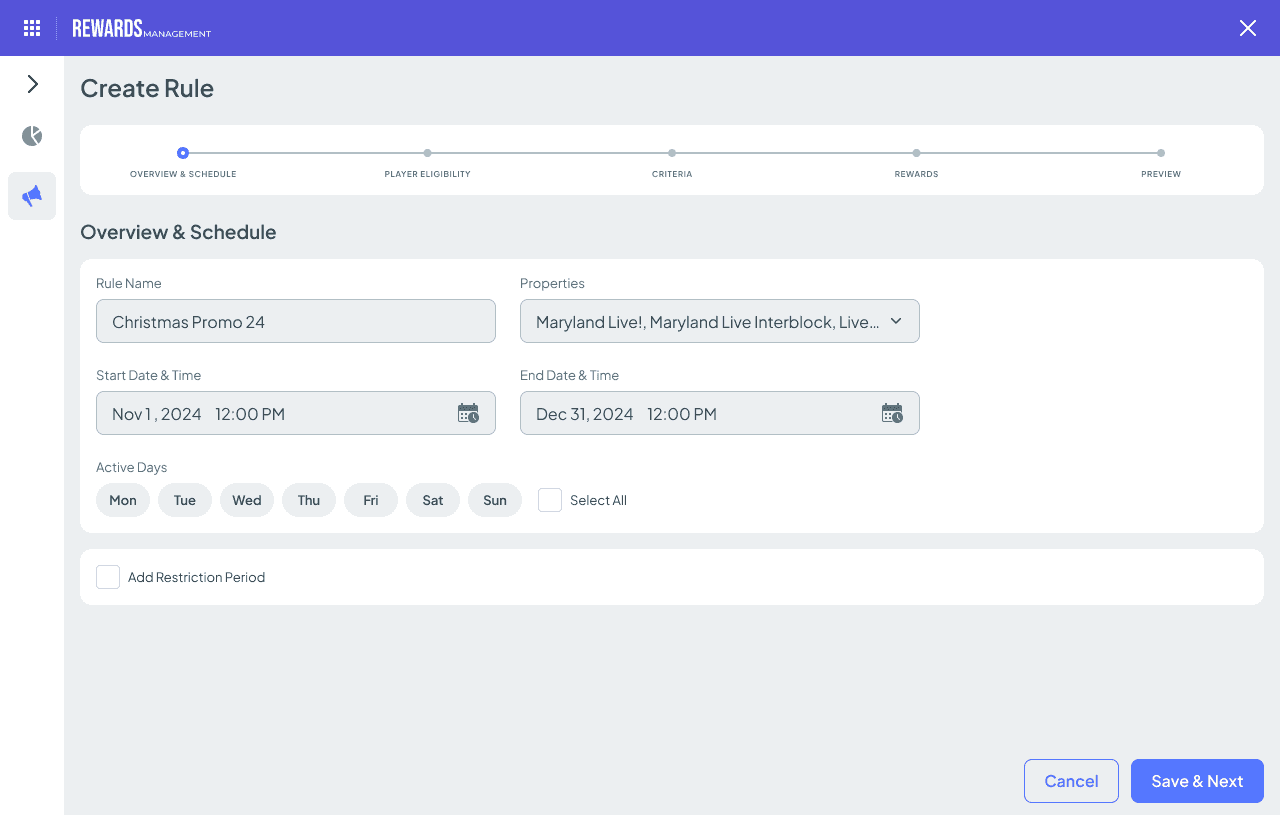

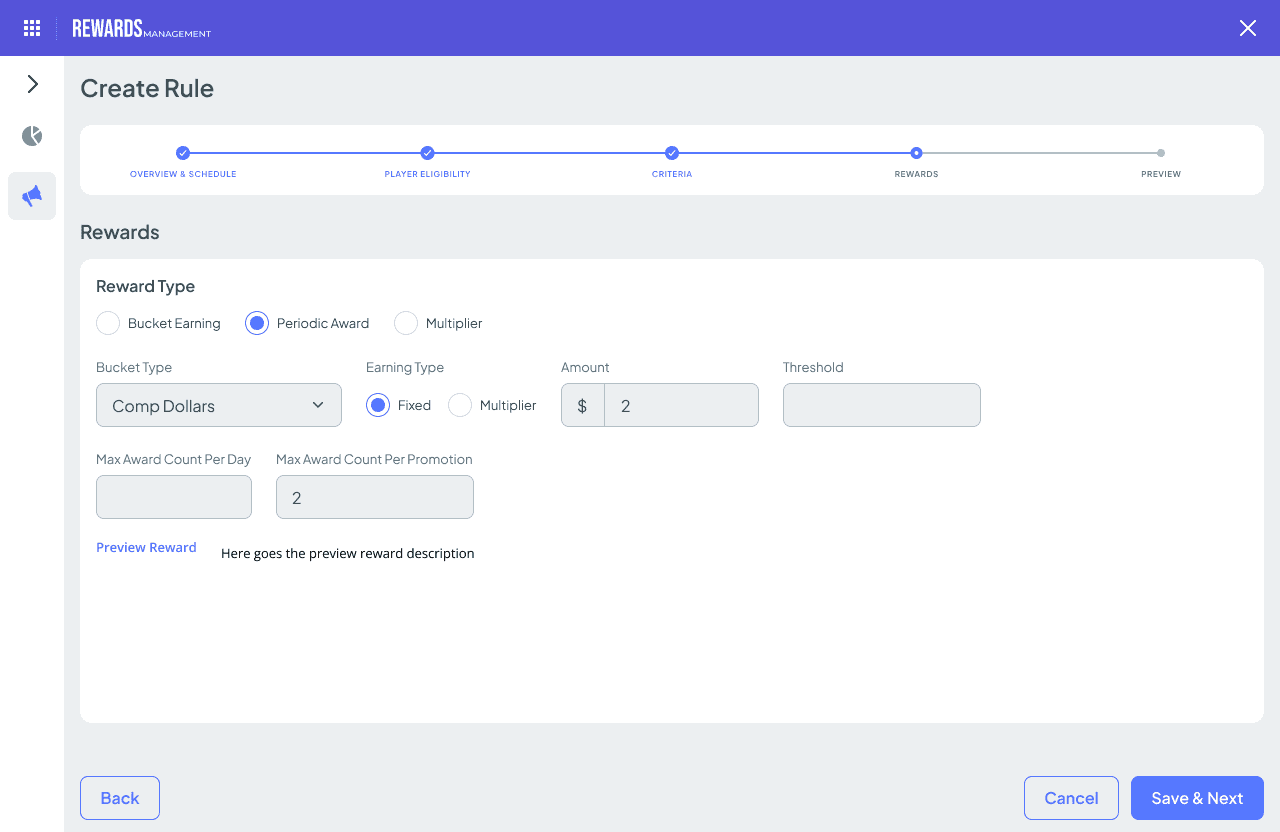

Enable non-technical Marketing teams to independently launch campaigns within 1 hour — removing engineering from the critical path entirely.

Build a predictive validation layer that prevents conflicting reward rules from going live simultaneously — eliminating the root cause of 60% of campaign failures.

Increase campaign frequency by removing the bottleneck, aiming for a 40%+ increase in targeted campaign launches per quarter.

These aren't just UX metrics — each outcome maps directly to a business lever: operational cost, revenue velocity, data reliability, and platform longevity.

−75% · Campaign deployment time

Reduced from 4 days to under 1 hour. Marketing can now respond to market events in real time — a capability that didn't exist before.

−90% · Manual errors

Predictive logic validation catches conflicts before they go live — eliminating the root cause of the majority of historical campaign failures.

100% · Marketing ownership of the campaign lifecycle

Marketing now owns the full end-to-end campaign lifecycle. Engineering was entirely removed from the operational path for routine campaign work.

10× · Active usage

The modular architecture and intuitive builder drove a 10-fold increase in active usage — from a tool people tolerated to one they depended on.

The original ask was to "clean up the interface." I reframed it as a strategic opportunity to unlock business velocity. That reframe — and the ability to articulate it in business terms — was what secured executive sponsorship and cross-functional participation.

The 60% statistic — that most failures were UI-induced logic conflicts, not technical bugs — didn't just inform the design. It changed how engineering and product thought about the problem. Research presented well is the most persuasive thing a design leader has.

The modular architecture wasn't a UX decision — it was a business continuity decision. By designing the component system to be extensible, we avoided a rewrite cycle every time a new reward type was introduced. That's the difference between solving today's problem and building tomorrow's platform.

Giving non-technical teams full ownership of a previously gated workflow is a business transformation, not just a usability win. When 100% of campaigns can be launched without engineering, you've fundamentally changed what's possible for the business.

I'm open to Design Director, UX Director, and Head of UX opportunities.